Moral public goods are a big deal for whether we get a good future


23rd February 2026

Short summary

A moral public good is something many people want to exist for moral reasons—for example, people might value poverty reduction in distant countries or an end to factory farming.

If future people care somewhat about moral public goods, but care more about idiosyncratic selfish goods, then there may be significant gains from them coordinating to fund moral public goods. Even though it’s in each individual's personal interests to fund selfish goods, everyone is better off if they all switch to funding moral public goods.

Ensuring that this coordination happens seems potentially very important for how well the future goes.

We tentatively think that this argument suggests distributing power relatively widely (so that there are more gains from trade), while improving our ability to coordinate to fund moral public goods. It also suggests encouraging evidential cooperation in large worlds (ECL).

Long summary

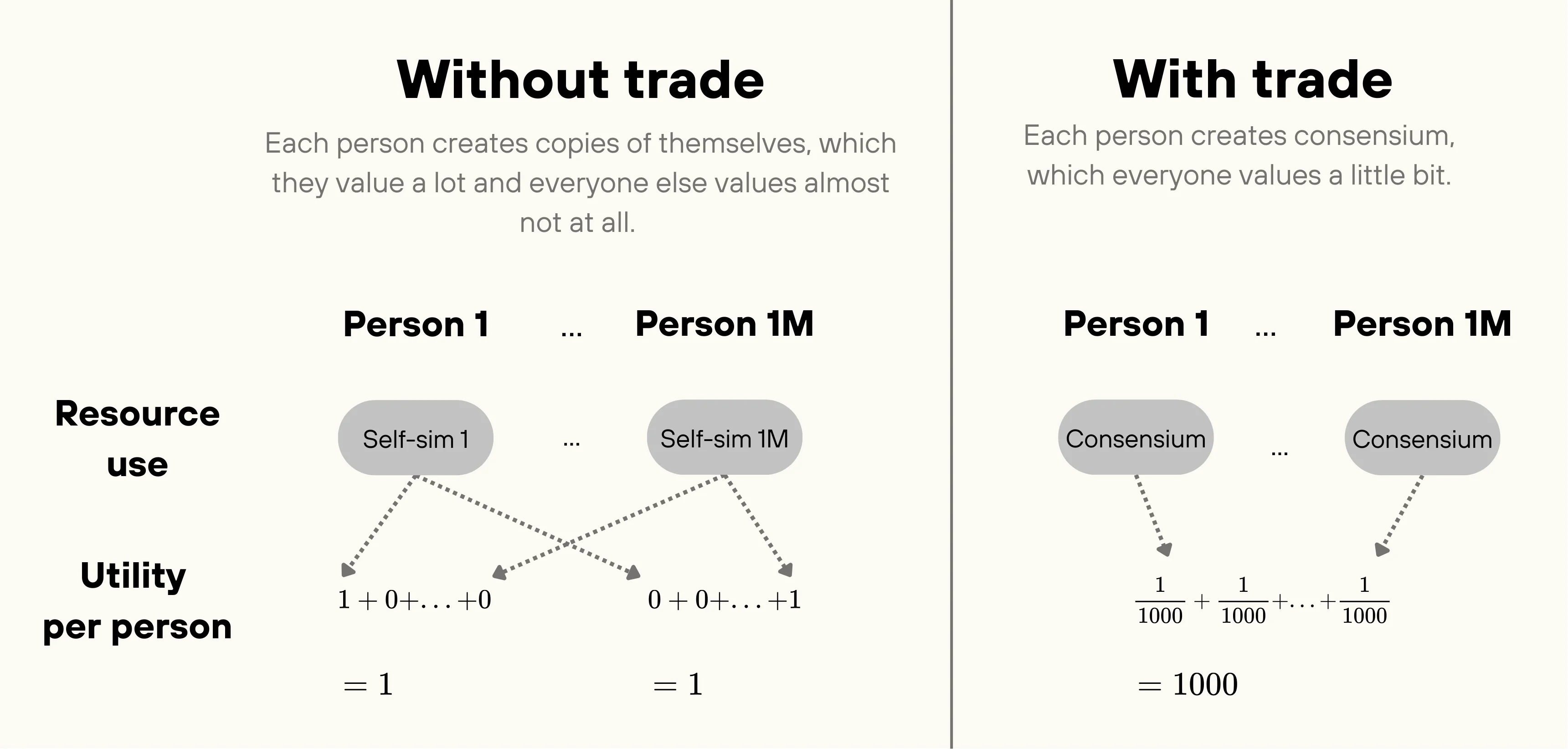

Suppose that after the intelligence explosion there's a society of a million people each deciding what to do with a distant galaxy they own. Every person can use their resources to either simulate themselves (“self-sims”) or create something that everyone values, perhaps hedonium or civilizations of happy, flourishing people (“consensium”1). Assume for now that they value both goods linearly, but value their own self-sims a thousand times as much as consensium and value others’ self-sims negligibly.

Absent trade, everyone spends all their resources on self-sims. But they could instead agree to spend everything on consensium. Although they value consensium a thousand times less than self-sims, they get a million times as much of it by participating in the trade—a thousand-fold increase in value by each person’s lights!
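The arithmetic in the previous paragraph can be checked with a short Python sketch. It assumes exactly the toy setup described: one galaxy per person, linear values, own self-sims worth a thousand times as much as consensium, and other people's self-sims worth nothing. The names `N`, `R`, and `utility` are ours, for illustration only.

```python
# Toy example: N people, one galaxy each, linear values.
N = 1_000_000  # number of people (one galaxy each)
R = 1_000      # value of a galaxy of own self-sims relative to a galaxy of consensium


def utility(own_self_sim_galaxies, consensium_galaxies):
    """One person's utility, measured in 'galaxies of consensium'.

    Other people's self-sims are assumed to be worth nothing to this person.
    """
    return R * own_self_sim_galaxies + consensium_galaxies


no_trade = utility(own_self_sim_galaxies=1, consensium_galaxies=0)  # own galaxy of self-sims
trade = utility(own_self_sim_galaxies=0, consensium_galaxies=N)     # everyone builds consensium

print(no_trade, trade, trade / no_trade)  # 1000 1000000 1000.0
```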

In general terms, rather than each party pursuing idiosyncratic goods (valued only by them), everyone agrees to pursue consensus goods (valued by everyone). This is a form of moral trade, which might have especially large gains from trade when people have linear preferences in both idiosyncratic and consensus goods. We’re excited about this both because we think that linear preferences are reasonably likely and because we think that other methods of moral trade work less well when all participants have linear preferences.2

Consensium is a type of public good. Everyone derives value from the existence of consensium, whether or not they contributed to funding it. We call goods like consensium moral public goods.


We’ve presented a stylized trade-off between something totally particular (“self-sims”) and something totally universal (“consensium”). In practice, there's probably a spectrum.3 Mutually beneficial trades can occur anywhere along this spectrum, whenever people shift resources from more idiosyncratic to more widely valued goods.

Of course, this requires that people have both idiosyncratic and consensus goals. It’s not totally clear that this will be true. Maybe everyone’s values will fully converge, and they’ll spend all their resources pursuing those shared values, without any need for trade. Or maybe everyone’s values will entirely diverge, leaving them with no shared goals at all. In that case, coordinating on moral public goods isn’t possible.

But we think it's reasonably likely that people will continue to have both idiosyncratic and widely shared preferences. If so, these trades could matter a lot for whether the future goes well.

Some strategic implications:

- Distribute power widely.4 The more people who share power, the greater the gains from trade, and the more likely that people switch from funding idiosyncratic goods to consensus goods. So this is a general argument in favour of distributing power as widely as possible, as long as large-scale coordination is possible—which we think is doable via taxation.

- But avoid highly fragmented governance. You only get to capture these large gains from trade if you’re actually able to coordinate. This speaks against highly decentralized approaches—whether libertarian futures where individuals have total control of their own resources, or massively multipolar worlds with millions of independent polities and no mechanism to compel contributions. Funding public goods is hard because everyone has a strong incentive to free-ride: in the toy example, each person prefers that everyone else switch to consensium while they keep funding self-simulations. Historically, the main scalable method for funding public goods has been governments that force individuals to contribute.

  Combining this point with the previous one, moral public goods are most likely to be funded if power is broadly distributed but the government can tax people to fund consensus goods that they vote for.5

- Develop voluntary mechanisms for funding moral public goods. Coordination technology might eventually solve the free-rider problem and allow people to make deals to fund moral public goods without government coercion. We're excited about research in this direction, though we think the free-rider problem is surprisingly hard to escape.

- Encourage ECL. Evidential Cooperation in Large Worlds (ECL)6 combines evidential decision theory7 with the notion that the multiverse may contain huge numbers of agents with decision procedures correlated with yours.

  ECL plausibly provides a very strong mechanism for funding moral public goods. If you shift $1 from something only you value to something valued by all correlated agents, they do the same. This gets you a large increase in consensus goods for a small sacrifice of idiosyncratic goods—a great deal by your lights. With many correlated agents who have diverse idiosyncratic values but share your consensus goals, the multiplier is potentially huge (e.g. of consensium for each $1 you move away from self-sims).

- It might matter less how much people prioritize consensus goods, and more what those consensus goods actually are. In the past, we've worried that even if there’s widespread moral convergence, people might still prioritize other goals like personal consumption, status competitions, or idiosyncratic ideological projects. But the argument above suggests that if enough people care about a goal even a little bit, they'll shift all their spending toward it. The difference between a very “selfish” person (who cares very little about consensus goods) and a very “altruistic” one (who cares a lot) might not matter so much, as long as everyone cares at least a bit.

  What does matter is what those consensus goals actually are. There could be substantial differences in value—by our lights—between different conceptions of pleasure, beauty, well-being, or consciousness. And there are potential consensus goals that would be bad or valueless, like sadism or nothingness.

One important qualification: our toy example assumed that people value both idiosyncratic and consensus goods linearly. We're massively uncertain what the structure of people's preferences will look like in the long run, and so we’re uncertain about our conclusions. We checked whether our results held across various classes of plausible-seeming utility functions and, for most of them, coordination and distribution of power were helpful for increasing spending on consensus goods.

But there are plausible utility functions where these results don't hold. For example, human behavior today can be modeled by preferences that allocate a fixed fraction of resources to each type of good, regardless of price.8 Under those preferences, a coordination mechanism that effectively makes consensium cheaper wouldn't actually get people to spend more on it. And for some utility functions, broadening the distribution of resources can actually decrease spending on consensus goods, even when coordination is possible.

The structure of the rest of the note is as follows:

- We define moral public goods, and clarify their relationship to moral trade.

- We first assume a specific model of people’s values (where idiosyncratic and consensus preferences are both linear). We show that, in the context of causal trades, moral public goods get the most funding if resources are widely distributed and coordination is possible. We discuss specific mechanisms to enable coordination on moral public goods, including government taxation, social norms, and voluntary deals.

- Next, we turn to acausal coordination and argue that evidential cooperation in large worlds (ECL) is very well-suited for funding consensus goods.

- Then we consider how robust our arguments are to our assumptions that people will have linear preferences.

- Finally, we assess how valuable spending on moral public goods would actually be.

What are moral public goods?

The consensium example from above illustrates a general dynamic that Paul Christiano calls a “moral public good.” Many people may value some good for moral reasons, but no one values it enough to fund it themselves, even though it’s in everyone’s collective interest to fund it. As far as we’re aware, the dynamic was first identified by Milton Friedman,9 and developed further by other economists.10 Moral public goods are different from other public goods in that people don’t personally benefit from the good. Instead, they just care intrinsically about the good existing.

Examples of moral public goods might include existential risk mitigation, poverty relief, environmental protection, art creation, scientific inquiry, and animal welfare improvements. (Although often these are regular public goods, too, since people derive personal benefit from many of these goods. We acknowledge that the distinction is somewhat fuzzy and many people will derive both a personal and moral benefit from the same good—you might personally value not dying in an extinction event and morally value the existence of future people.11)

Just like other public goods, moral public goods are liable to be underfunded,12 because of the free-rider problem: everyone prefers paying their share over not getting the good at all, but they prefer even more to let others fund it while they get to keep their money. We currently solve this coordination problem by governments collecting taxes and spending the proceeds on consensus goods.

We think that public goods, and whether we coordinate to fund them, might be very important for how good the long-run future is. In the future, people may have the opportunity to allocate resources in distant galaxies that they will never personally visit. For those decisions, most of the benefit a decision-maker can derive is moral or ideological, not personal. Thus, we think coordination on shared moral goals is especially important.

How does this relate to moral trade?

Trade over moral public goods is an example of moral trade.

Classic cases of moral trade often focus on people trading over idiosyncratic moral preferences. For example, consider two people who each control a galaxy’s resources. One person cares about hedonic pleasure while the other cares about freedom. Left to their own devices, the freedom lover would create a society where everyone is perfectly free, while the hedonic utilitarian would create one where everyone is maximally blissful. But there's an opportunity for trade. The hedonic utilitarian could tweak their society to increase freedom at low cost to pleasure, while the freedom lover could look for ways to increase pleasure without significantly compromising freedom. Both get more of what they want.

This is nice, but the gains seem fairly limited when both parties are trading idiosyncratic goods that they both value linearly. With just two trading partners, even in the most optimistic case—where each party achieves 99.999% of their possible value in both galaxies—trade only gives you a 2x multiplier on value. If you wanted 100x gains from trade, you would need to find a hybrid good that was simultaneously nearly optimal for 100 different value systems. We wouldn't expect one to exist in most cases.
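To make the ceiling concrete, here is a simplified model of our own (not one taken from the source). With linear preferences, suppose every galaxy is filled with the same hybrid good, and that good gives each party a fraction `fit` of the value they would get from a galaxy optimized for themselves. Each party's multiplier is then the number of partners times `fit`, so it can never exceed the number of partners. The function name is hypothetical.

```python
def hybrid_trade_multiplier(n_partners, fit):
    """Gain from trade if every galaxy produces one shared hybrid good.

    Assumes linear preferences, one galaxy per partner, and that the hybrid
    gives each partner a fraction `fit` (0..1) of the value of a galaxy they
    optimized for themselves. Without trade, each partner gets 1 galaxy's worth.
    """
    if not 0.0 <= fit <= 1.0:
        raise ValueError("fit must be between 0 and 1")
    return n_partners * fit


print(hybrid_trade_multiplier(2, 0.99999))    # just under 2, even with a near-perfect hybrid
print(hybrid_trade_multiplier(100, 0.99999))  # ~100x needs a hybrid near-optimal for all 100
print(hybrid_trade_multiplier(100, 0.05))     # a mediocre hybrid gives only about 5x
```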

The moral public goods case, in contrast, is a moral trade where people agree to shift resources from idiosyncratic preferences that they individually value highly to consensus preferences that everyone values a little.

Coordinating on moral public goods works especially well when everyone has preferences that are linear in resources (see below)—exactly the case where the gains from coordinating on hybrid goods seem especially limited. It's also easier to scale to huge numbers of trading partners, since everyone just produces whatever best satisfies their shared values rather than needing to find hybrid goods that satisfy many value systems. This scalability matters because gains from trade grow with the number of participants: in our toy example in the summary, a million people coordinating on something they all valued a tiny bit yielded 1000x gains from trade.

The downside of coordinating on moral public goods is that it does require a large number of people to share some consensus preferences. This might not always be true (see below). But when such shared preferences do exist, we expect coordination on moral public goods to yield larger gains from trade than coordination on hybrid goods, at least when there are many participants with linear preferences.

Scenario 1: causal coordination

For now, we’ll assume that beings with decision-making power have quasilinear preferences over three types of goods. First, there are some goods that they value for self-interested reasons, like food, shelter, and luxuries for their biological self, which exhibit steeply diminishing returns. We’ll call these goods basics. Second, there are some goods that they value for idiosyncratic reasons, which have linear utility. These could include simulations of themselves or people living according to their own culture. We’ll call these goods self-sims. Finally, there are some goods that everyone values linearly. This could be new civilizations crammed with flourishing, joy, adventure, connection, beauty, and so on. We’ll call these goods consensium. Everyone values consensium, but no one values anyone else’s basics or self-sims.

To help us illustrate more concretely, we’ll assume a particular utility function, with b and s representing each person’s basics and self-sims, respectively, and C representing the total amount of consensium:

$$
u(b, s, C) = 40\sqrt{b} + s + \frac{C}{5 \times 10^{9}}
$$

That is, people care a lot about basic goods but get diminishing utility from them; they care quite a lot about self-sims; and they care only a tiny bit about consensium.

Given this utility function, how do people spend their wealth? Consider three different scenarios. In each scenario, we’ll assume that every good costs $1 per unit, that total wealth is $100T, and that there are 10B people. (The precise numbers don’t matter; this is just to illustrate.)

| Scenario | Total spent on basics | Total spent on self-sims | Total spent on consensium |
|---|---|---|---|
| Single decision-maker controls all resources13 | $400 | $100T – $400 | $0 |
| Resources divided evenly among 10B people, no coordination14 | $4T | $96T | $0 |
| Resources divided evenly among 10B people and they coordinate15 | $1T | $0 | $99T |

The key qualitative upshot is this: with good coordination and widely distributed resources, the effective price of the consensus goods drops dramatically. Every $1 you spend on consensium results in $10B going towards it—a 99.99999999% discount.16 On this model, people buy vastly more consensium, both absolutely and as a share of their budget, than in either the dictatorial or uncoordinated scenario.
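The table can be reproduced with a short Python sketch. It assumes each person's utility is 40√b + s + C/(5×10⁹), where b is their spending on basics, s their spending on self-sims, and C total consensium. This is one functional form consistent with the table's numbers and with the 5-billion ratio discussed below, not necessarily the authors' own. The helper names `allocate` and `scale` are hypothetical.

```python
# Assumed utility per person: K*sqrt(b) + s + EPS*C, with C = total consensium.
# K and EPS are chosen to reproduce the table; they are assumptions.
K = 40.0
EPS = 1 / 5e9                # a unit of consensium is worth 1/(5 billion) of a self-sim
TOTAL_WEALTH = 100e12        # $100T
POPULATION = 10_000_000_000  # 10B people


def allocate(wealth, consensium_rate):
    """Per-person spending that maximizes K*sqrt(b) + s + consensium_rate*c.

    consensium_rate is the utility one of this person's dollars on consensium
    yields, including any matching from coordination. Self-sims yield 1 per $.
    """
    best_linear = max(1.0, consensium_rate)
    # Marginal utility of basics is K / (2*sqrt(b)); spend until it falls to best_linear.
    basics = min(wealth, (K / (2 * best_linear)) ** 2)
    rest = wealth - basics
    if consensium_rate > 1.0:
        return {"basics": basics, "self_sims": 0.0, "consensium": rest}
    return {"basics": basics, "self_sims": rest, "consensium": 0.0}


def scale(spending, people):
    """Total spending across `people` identical people."""
    return {good: amount * people for good, amount in spending.items()}


per_capita = TOTAL_WEALTH / POPULATION  # $10,000 each

scenarios = {
    "single decision-maker": scale(allocate(TOTAL_WEALTH, EPS), 1),
    "10B people, no coordination": scale(allocate(per_capita, EPS), POPULATION),
    # Coordinated: each $1 one person moves to consensium is matched by all 10B people.
    "10B people, coordinated": scale(allocate(per_capita, EPS * POPULATION), POPULATION),
}

for name, spending in scenarios.items():
    print(name, {good: f"${amount:,.0f}" for good, amount in spending.items()})
```

Coordination enters only through `consensium_rate`: when all 10B people match each other, one person's $1 on consensium yields 10B units, which is the one-in-ten-billion effective price described above.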

This argument suggests we should try to ensure both widely distributed power and good coordination mechanisms for funding public goods.

How widely does power need to be distributed? This depends on how much you expect people to value idiosyncratic goods relative to consensus goods. In our example above, each person valued self-sims 5 billion times as much as they valued consensium, so we needed at least 5 billion people for consensium to get funded at all.

We’re quite uncertain about how much people will value idiosyncratic goods relative to consensus goods. We tentatively think that ratios of a few thousand or a few million seem quite plausible and ratios as high as a few billion are somewhat plausible, so distributing power across thousands, millions, or even billions of people could be valuable.17
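Under the linear model, the threshold in the two paragraphs above reduces to one comparison: with n people coordinating, $1 moved to consensium yields n units, so consensium wins once n exceeds the ratio between how much a person values their self-sims and how much they value consensium. The sketch below, with the hypothetical helper `min_coalition_size`, applies this to ratios of the sizes mentioned in the text.

```python
import math


def min_coalition_size(ratio):
    """Smallest number of coordinating people for which consensium gets funded.

    Assumes linear preferences where each person values a unit of their own
    self-sims `ratio` times as much as a unit of consensium. With n people
    coordinating, $1 moved to consensium yields n units (worth n / ratio),
    versus 1 unit of self-sims, so funding requires n / ratio > 1.
    """
    return math.floor(ratio) + 1


for ratio in (1e3, 1e6, 5e9):
    print(f"ratio {ratio:,.0f}: need at least {min_coalition_size(ratio):,} people")
```

Strictly, funding requires n to exceed the ratio; at exactly the ratio a person is indifferent, which is why the result is one more than the ratio.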

How to coordinate causally

There are three approaches to funding public goods that might work for moral public goods after the singularity: governments, social norms, and voluntary contracts.

Today, public goods are funded primarily by governments. Governments force everyone to contribute to public goods, regardless of whether they actually value the good. Even in a democracy, a minority’s preferred public goods might go unfunded, while their taxes pay for goods they're indifferent to. It would be better if there were a way to allow arbitrary combinations of individuals to coordinate and fund the goods they collectively value, without forcing contributions from those who do not value the good.

We were initially optimistic that this would be possible through voluntary contracts. After all, it's in everyone's collective interest to get these goods funded, and we expect that artificial superintelligence (ASI) will be able to resolve some barriers to coordination that prevent mutually beneficial deals today, like transaction costs or difficulties making credible commitments. But it seems surprisingly difficult to get around the free-rider problem. Advanced technology might even open up new ways to free-ride, like self-modifying so that you no longer value the moral public good (see Appendix B for more details on funding moral public goods via voluntary contracts).

Another approach to funding public goods is social norms. Individuals contribute to public goods to avoid social sanctions, win praise from their peers, or just to live up to their own self-conception as cooperative and norm-abiding. We’re relatively pessimistic about this approach because it seems less scalable and less flexible than either governments or voluntary contracts. Social pressure is probably most effective within social communities, which might cap out in the hundreds or thousands of people. Communities of this size might not include all the people that you’d want to coordinate with. Also, social norms may end up targeting arbitrary goals rather than funding moral public goods. Lastly, social norms emerge organically, which makes their terms harder to renegotiate if they prescribe excessively harsh punishments or the wrong level of contributions from individuals.

Some other historical mechanisms for funding public goods make use of them being (partially) excludable.18 But moral public goods are entirely non-excludable: once the good exists, each person who wanted it now benefits.

Scenario 2: ECL

We might also be able to fund moral public goods through acausal coordination. This section presents one proposal for such coordination, drawing on the idea of evidential cooperation in large worlds (ECL). A core premise of ECL is that there are likely many causally disconnected agents—in civilizations inside our universe but outside our lightcone, civilizations in different Everett branches, or civilizations in other parts of the Tegmark IV multiverse. Each of these agents faces a choice about how to allocate their resources: toward idiosyncratic goods valued only by them, or toward consensus goods that many beings throughout the multiverse would value. We can't causally affect their decisions, but our own choice—whether to fund consensus goods over idiosyncratic ones—provides evidence about what other agents with sufficiently similar decision procedures will choose.

To illustrate, let's return to our toy example where each agent cares about one idiosyncratic good (self-sims) and one consensus good (consensium):

- If an agent spends $1 on self-sims, they get evidence that huge numbers of other agents spend on self-sims. But they only value another agent's self-sims if that agent is an exact copy of them.19 There are some agents who are exact copies—it's a big multiverse—but most of the agents correlated with them aren't exact copies, so those self-sims are worthless to the original agent. Their dollar is matched only by their copies.

- If an agent spends $1 on consensium, they get evidence that all those correlated agents shift $1 to consensium too. Unlike self-sims, they care about consensium created by any of those agents. Their dollar is thus matched across the multiverse by anyone whose decision is sufficiently correlated with theirs.

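Whether this trade is worthwhile from an agent's perspective depends on the following ratio:

$$
\frac{\text{number of agents whose decisions are correlated with yours}}{\text{number of those agents who are exact copies of you}}
$$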

This ratio determines the multiplier they get from coordinating with everyone funding consensium. If the multiplier is large enough to overcome the lower value they place on consensium relative to self-sims, the trade is worthwhile.

(Actually, you should weight each agent by the degree of correlation, but the above formula ignores that for simplicity.20)
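Combining the two bullets above with this weighting note, one way to sketch the multiplier is: the correlation-weighted number of agents who match a $1 shift to consensium, divided by the correlation-weighted number who match $1 of self-sims (only exact copies, including yourself). The `Group` type, the correlation values, and the example population are hypothetical, chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Group:
    count: int          # number of agents in the group
    correlation: float  # how strongly their choice tracks ours, 0..1 (assumed)
    is_copy: bool       # do they share our idiosyncratic values exactly?


def ecl_multiplier(groups):
    """Correlation-weighted agents matching a $1 shift to consensium, divided by
    correlation-weighted agents matching $1 of our self-sims (exact copies only)."""
    everyone = sum(g.count * g.correlation for g in groups)
    copies = sum(g.count * g.correlation for g in groups if g.is_copy)
    return everyone / copies


def worth_trading(groups, self_sim_to_consensium_ratio):
    """Shift to consensium if the multiplier beats how much more we value self-sims."""
    return ecl_multiplier(groups) > self_sim_to_consensium_ratio


# Hypothetical population: ourselves, 9 exact copies, a million non-copies at 0.5.
example = [
    Group(count=1, correlation=1.0, is_copy=True),
    Group(count=9, correlation=1.0, is_copy=True),
    Group(count=1_000_000, correlation=0.5, is_copy=False),
]
print(ecl_multiplier(example))  # 50001.0
```

Here the multiplier is about 50,000, so an agent who values their self-sims 1,000 times as much as consensium would shift to consensium, while one with a ratio of a million would not.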

There are many possible trading partners. There are astronomical numbers of possible human genomes and even humans with the same genome might diverge due to different life histories. And there are many other possible minds that we could cooperate with—alien intelligences, AIs, and whatever else might exist.

If your idiosyncratic values are indexical—you only care about your personal consumption—then you’ll share those values with none of your possible trading partners. But your decision gives you some evidence about what those others decide. The evidence doesn’t even need to be that strong to be significant. Even a 1% correlation could matter a lot when multiplied across huge numbers of potential trading partners.
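For purely indexical values, the only agent matching your self-sim spending is you, so even weak correlations add up. A minimal sketch, assuming every other agent is correlated with you at exactly 1%; the partner counts and the function name are arbitrary illustrative choices.

```python
def indexical_multiplier(n_others, correlation):
    """ECL multiplier when only you value your idiosyncratic goods.

    Your self-sims are matched only by you (weight 1). A $1 shift to consensium
    is matched by you plus n_others agents, each weighted by `correlation`.
    """
    return 1 + n_others * correlation


for n in (10**3, 10**6, 10**12):
    print(f"{n:,} partners at 1% correlation: multiplier ~{indexical_multiplier(n, 0.01):,.0f}")
```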

Even if your idiosyncratic values aren't indexical—even if they could in principle be shared by agents outside your lightcone—the multipliers might still be large. The space of possible idiosyncratic values is vast. Some agents will share your decision procedure but have different idiosyncratic values. (The authors of this piece disagree about how tightly linked these are in practice, and therefore disagree about the magnitude of the multiplier.)

The ECL case differs from the causal case in several important ways.

First, ECL removes the incentive to free-ride. In the causal story, each agent wants everyone else to fund consensus goods while they buy idiosyncratic goods. Under ECL, this isn't an option. If an agent buys idiosyncratic goods, so does everyone else correlated with them. Thus, the agent is incentivized to pay for consensus goods even without central enforcement.

And with ECL, funding for consensus goods is much less sensitive to the distribution of power on Earth. In the causal case, we only got large "discounts" on consensus goods if power was widely distributed; a single dictator preferred to just fund idiosyncratic goods. But with ECL, even a world dictator gets massive "discounts" on consensus goods from coordinating with others in the multiverse.

Of course, unlike the causal case, whether consensus goods get funded depends on whether agents want to do acausal cooperation at all—which depends on their decision theories and their beliefs about their degree of correlation with others.

Robustness to different structures of preferences

So far we have mostly assumed that people value consensus and idiosyncratic goods linearly. We think that this is plausible. After ASI, people will be extremely wealthy. If they have any linear preferences at all, their spending will mostly be determined by those preferences, since they'll quickly saturate their sublinear ones. And there are theoretical arguments for having linear preferences.21 Meanwhile, people with sublinear preferences may end up controlling few resources—they'd be less willing to adopt riskier but higher-reward strategies, like trading away guaranteed resources near Earth for resources further out in space that might already be occupied. As such, we expect them to trade away most of their resources to people with linear preferences.

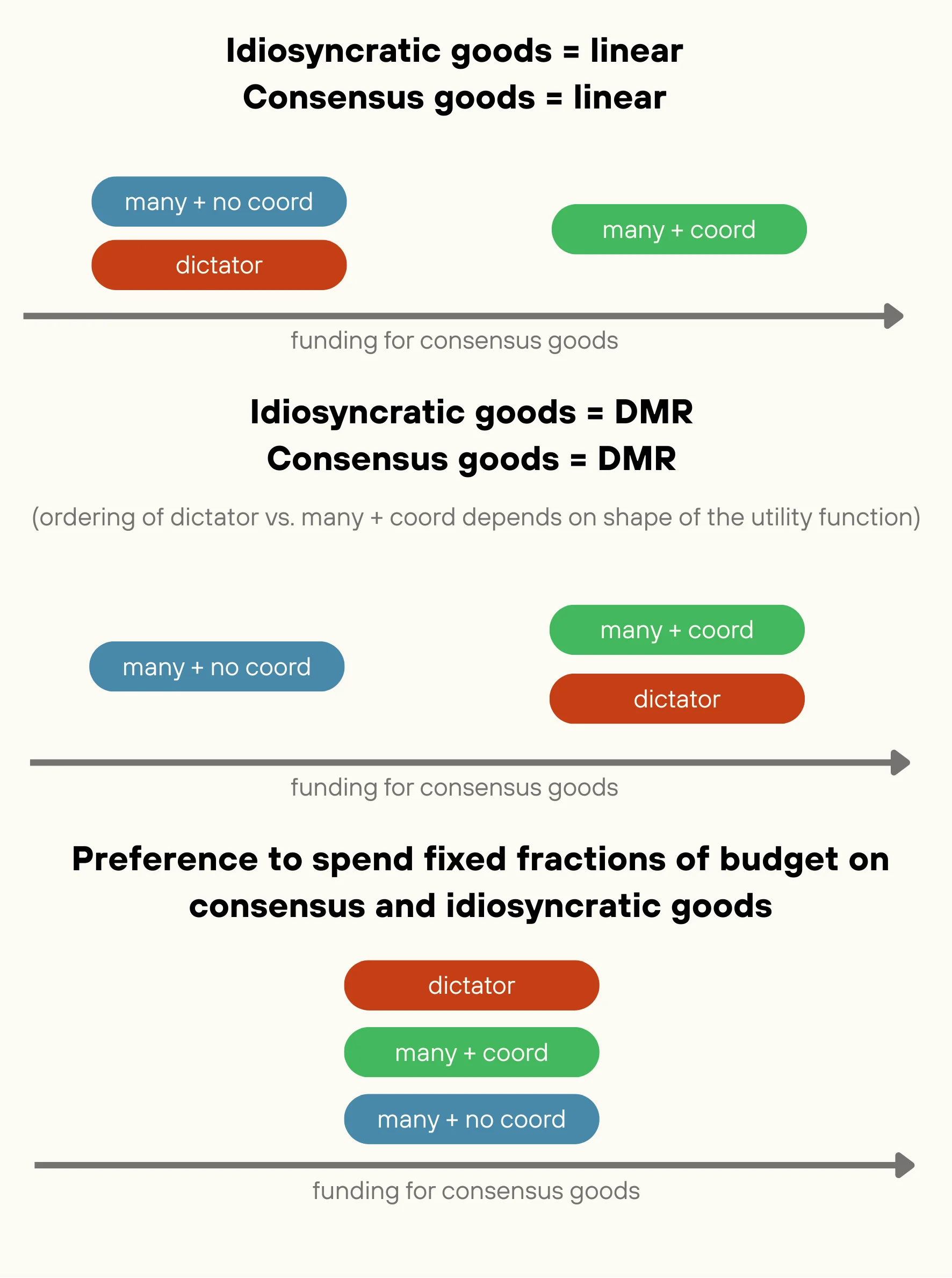

With linear utility functions, we found that many coordinated people fund more public goods than either a single decision-maker or many uncoordinated people, which suggested that both coordination and wider resource distribution increased funding for public goods.

We checked whether these conclusions held for a few other utility functions that seemed plausible to us. Among the preference structures we checked, enabling coordination was always helpful (or at least not harmful) for increasing spending on consensus goods. However, broadening the distribution of power was sometimes actively counterproductive.

We're quite uncertain about what preference structures humans will have after the singularity, and it's very possible we're missing a common form that future preferences will take. So we remain pretty unsure about the generality of our conclusions.


With that caveat in mind, here are the other preference structures we checked:

- Preferences with diminishing marginal returns in idiosyncratic and consensus goods. Someone might value many goods, both idiosyncratic and consensus, each with its own rate of diminishing marginal returns (DMR). They'll shift marginal spending from idiosyncratic to consensus goods based on the relative marginal returns. Coordination essentially increases the marginal returns on consensus goods by a constant factor (the number of people coordinating), which can shift more spending into consensus goods. So, as in the linear case, coordination is pretty robustly good: it increases, or at least doesn't decrease, spending on public goods.

  However, in the absence of coordination, widely distributing resources can actually reduce spending on consensus goods. Compare a dictator holding all the resources to N uncoordinated people, each with 1/N of the resources. The dictator will be able to spend more in absolute terms on idiosyncratic consumption, so they experience much lower marginal returns on that consumption and are correspondingly more willing to shift funding toward consensus spending. Intuitively, a single person's idiosyncratic desires saturate faster than N people's combined desires, freeing up more resources for consensus goods.

  So more public goods get funded in a world with a single decision-maker, and in a world with many coordinated decision-makers, than in a world with many uncoordinated decision-makers. How do the coordinated multipolar world and the single decision-maker world compare?

  It depends on the precise shape of the utility function. For some DMR functions of x (the amount of resources spent on idiosyncratic goods), many coordinated people fund more public goods than single dictators: here the boost from coordination matters more than the hit from having to fund many people’s idiosyncratic goods. For other DMR utility functions, such as some defined in terms of a constant threshold, dictators may fund more consensus goods. See Appendix A for more details.

  (These same conclusions largely apply if someone values consensus goods linearly and has DMR in idiosyncratic goods, or vice versa.)

- Preferences to spend fixed fractions of resources on consensus and idiosyncratic goods, regardless of price. This matches how people today typically allocate resources. Even when people learn that certain charities achieve huge amounts of good per dollar, they very rarely reallocate spending between idiosyncratic and consensus goods. This suggests they are not price-sensitive, but rather spend a fixed fraction of their resources on consensus goods regardless of how effectively those resources can be deployed.

  (You can also get this spending pattern if you model a human as containing two sub-agents (one that cares only about idiosyncratic goods, one that cares only about consensus goods) and these sub-agents bargain to determine the human’s actions.22)

  With this utility function, cheaper public goods make no difference to allocation, and coordination doesn’t help. Resource distribution also doesn’t matter: each individual spends the same share of resources on consensus and idiosyncratic goods regardless of how many resources they control.
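The qualitative comparisons in this list can be reproduced with a small Python sketch. It assumes a linear value on total consensus goods and compares three rules for idiosyncratic spending: a square-root DMR utility, a utility that is linear up to a constant threshold `T` and flat afterwards, and the fixed-fraction rule just described. Coordination is modeled, as in the linear case, by multiplying the per-unit value of consensus spending by the number of people coordinating. These functional forms and all parameter values are our own illustrative choices, not necessarily the ones analyzed in Appendix A.

```python
# Illustrative parameters (assumptions, not from the source).
TOTAL = 1_000_000.0  # total resources
N = 100              # people in the multipolar worlds
EPS = 1e-3           # value per unit of total consensus goods (linear)


def idio_sqrt(wealth, rate):
    """DMR: u = sqrt(x) + rate * c. Spend on x until 1 / (2 * sqrt(x)) = rate."""
    return min(wealth, (1 / (2 * rate)) ** 2)


def idio_threshold(wealth, rate, T=5_000.0):
    """u = min(x, T) + rate * c: idiosyncratic goods worth 1 per unit up to T."""
    return min(wealth, T) if rate < 1 else 0.0


def idio_fixed_fraction(wealth, rate, alpha=0.9):
    """Fixed-fraction rule: spend share alpha on idiosyncratic goods, ignoring `rate`."""
    return alpha * wealth


def consensus_spending(idio_rule):
    """Total consensus spending in the (dictator, uncoordinated, coordinated) worlds."""
    dictator = TOTAL - idio_rule(TOTAL, EPS)
    uncoordinated = TOTAL - N * idio_rule(TOTAL / N, EPS)
    # Coordination: each unit one person gives is matched by all N people,
    # which multiplies the per-unit value of consensus spending by N.
    coordinated = TOTAL - N * idio_rule(TOTAL / N, EPS * N)
    return dictator, uncoordinated, coordinated


for name, rule in [("sqrt DMR", idio_sqrt),
                   ("threshold", idio_threshold),
                   ("fixed fraction", idio_fixed_fraction)]:
    d, u, c = consensus_spending(rule)
    print(f"{name:>14}: dictator {d:,.0f} | uncoordinated {u:,.0f} | coordinated {c:,.0f}")
```

With these numbers, the square-root case ranks coordinated above dictator above uncoordinated; the threshold case lets the dictator fund the most; and the fixed-fraction rule gives the same consensus spending in all three worlds.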

Convergence and moral public goods funding

Coordination to fund moral public goods isn’t possible if there's full convergence or full divergence. If everyone's values fully converge, they'll spend all their resources pursuing shared goals without any need for trade. If everyone's values fully diverge, there are no shared goals to coordinate on in the first place.

But if a group shares some consensus preferences while retaining different idiosyncratic ones, coordination to shift funding from idiosyncratic goods to consensus goods is possible. Gains from trade are largest if there's widespread convergence on consensus goals. But even with limited convergence, any subset of people with shared consensus goals can still benefit by trading among themselves.

How valuable is it to fund moral public goods?

This depends on how valuable the consensus goods are.

On subjectivism, if there's widespread convergence, most people will end up valuing those consensus goods—so unless you expect your values to substantially diverge from most people's on reflection, this should be great by your lights. Things are less clear if you expect low convergence, or if you expect to be in the minority. You'll still benefit from coordinating with others who share some consensus goals with you, but other coalitions might fund goods you dislike.

For example, people might coordinate on excessively punishing wrongdoers (negative value) or leaving large swathes of space as nature preserves (zero value), when we would have preferred that they hadn’t coordinated at all and instead funded personal consumption (weak positive value). But we don’t expect this effect to dominate, because most people’s values aren’t directly opposed.

Another issue is threats. Just as coordination lets a group do more with a fixed budget by funding shared goals rather than idiosyncratic ones, it might also make it easier to threaten that group with something they all dislike. We don't think this will leave the threatened parties worse off on net by their own lights, but it might be bad for more downside-focused agents. They bear the risk of threats against their values without as much of the corresponding upside.

Thus far we’ve argued that coordination will improve the value of the future by most people’s lights. But if moral realism is correct, then we should ask whether coordination will lead to the objectively best use of resources. There’s some reason for optimism here: under moral realism, lots of people might place at least some value on the impartially best use of resources, making that a very broadly appealing good.

But it’s unclear that people will coordinate to fund the most broadly appealing goods. People have a range of preferences that vary in how particular or universal they are. Moral public goods mechanisms can shift funding from satisfying more idiosyncratic preferences to more widespread ones—but they don’t necessarily fund the most universal preferences. For some people, the largest gains from trade might come from coordinating with a smaller group with especially similar preferences. If a nationalist values national benefit 100x more than consensium, then they’d rather coordinate with 1 billion fellow nationalists than 10 billion people globally.23
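Under the same linear assumptions as the toy example, the nationalist's comparison is simple arithmetic: a coalition's value to one member is the number of members times how much that member values the shared good. The helper name `coalition_value` is hypothetical.

```python
def coalition_value(members, weight):
    """Value to one linear agent when each of `members` people moves $1 to a
    shared good that the agent values at `weight` per unit."""
    return members * weight


nationalist = coalition_value(1_000_000_000, 100)      # national benefit, valued 100x
global_coalition = coalition_value(10_000_000_000, 1)  # consensium, valued 1x
print(nationalist / global_coalition)  # 10.0
```

By this measure, the nationalist coalition is ten times as valuable to the nationalist as the global one.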

And even if the most broadly appealing goods are funded, they might not be the objectively best use of resources. For example, humans might especially value the wellbeing of human-like minds. If coordination is only among humans, then public goods funding might flow toward creating societies of happy humans, even if non-human minds could experience more joy, freedom, or fulfillment per unit resource.

This last concern seems more serious for causal than for acausal coordination. Causal coordination will be limited to humans and AIs originating from Earth. Acausal coordination could involve a much wider variety of minds—aliens with very different biologies and civilizational histories. If we're correlated with them, then we're more likely to end up funding goods that are broadly appealing to all these types of minds, which are more likely to be the morally correct use of resources. But it’s possible that civilizations capable of ECL will tend to share similar values—maybe preferences for stuff that’s instrumentally useful like survival, growth, and knowledge—even if those aren’t objectively valuable.

Conclusion

If large numbers of agents can coordinate to fund goods they all value, this can produce substantial gains from trade. These gains are potentially large enough that even quite selfish actors would devote significant resources to consensus goods. We're excited about this type of trade because it could enable a near-best future by channeling substantial resources toward widely valued goods, even without any single agent heavily prioritizing those goods. This conclusion is most clear-cut when agents have linear utility functions, but it probably extends to some other plausible utility functions, including some with diminishing returns.

These benefits depend on there being a sufficient number of agents who share some consensus goals, who are able to coordinate. In the causal case, we’re most optimistic about coordination to fund consensus goods if power is widely distributed and there are governments that can collect taxes to fund public goods. We’re excited about further research on voluntary coordination methods, but they will have to deal with incentives to free-ride and/or strategically modify one’s own preferences. In the acausal case, ECL enables large trading coalitions even if there’s extreme power concentration on Earth and eliminates free-rider problems.

Appendix A: utility function with DMR in idiosyncratic goods and/or consensus goods

Suppose that there are people and total world resources . Let’s first consider the case where each person’s utility function is , where and are the resources spent on (respectively) idiosyncratic goods and consensus goods, and , , and are constants, with and .

In the multipolar and uncoordinated case, each person has to spend. Let’s say that is their spending on the consensus good and is the sum of everyone else’s spending. Since is their spending on the idiosyncratic good, their utility function in terms of will be:

This is maximized when (set ):

If everyone spends the same amount on consensus goods, then . Then, the total amount spent on consensus goods is such that:

In the dictator case, the dictator will allocate their resources so that , with . Then, the total amount spent on consensus goods will be , where:

This is identical to the constraint for the multipolar and uncoordinated case, except for a factor of on the LHS. The LHS reflects marginal benefit from spending on idiosyncratic goods. It's higher in the multipolar and uncoordinated case because—for a given level of total spending on —resources are spread across more individuals, and so each person spends less on idiosyncratic goods and faces steeper marginal returns.

In the multipolar and coordinated case, everyone can coordinate to spend on the consensus good—so the consensus good effectively becomes times cheaper relative to each person’s idiosyncratic good. You only need to give up $1 of idiosyncratic goods to gain $ of consensus goods.

So if each person spends on consensus goods and , then each person’s utility function is effectively:

If everyone spends the same amount on consensus goods, then . Then, the equilibrium amount spent on consensus goods is satisfying:

This is identical to the constraint for the multipolar and uncoordinated case, except for a factor of on the RHS, which reflects how for each dollar someone spends on consensus goods, dollars are spent in total because of the coordination.

Summing up, the conditions for each case are:

- Multipolar and uncoordinated case:

- Dictator case:

- Multipolar and coordinated case:

Based on these constraints, .24

Spending on consensus goods is lowest when power is dispersed among many people who cannot coordinate. Intuitively, each person has fewer resources, so a larger share of each person’s resources goes to satisfying their idiosyncratic preferences. Dictators have more resources and are able to allocate a larger total share to consensus preferences after satiating their idiosyncratic preferences. When power is widely distributed and there’s coordination, each person experiences a “multiplier” on spending on consensus goods. There’s still the effect where each person has fewer resources in total and thus gets greater returns from increasing their spending on idiosyncratic preferences, but it’s counterbalanced by the greater multiplier, at least for this class of utility functions.
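To make the ordering concrete, here is a minimal numerical sketch under one assumed member of this class of utility functions, \(u(x, y) = x^\alpha + a\,y^\beta\) with \(0 < \alpha < 1\) and \(0 < \beta \le 1\), where \(x\) is a person's idiosyncratic spending and \(y\) is total consensus spending. The functional form, parameter values, and function names are illustrative assumptions. Under this form, all three cases reduce to one first-order condition that differs only in how many people share the idiosyncratic budget (\(m\)) and in the coordination multiplier on consensus spending (\(n\)). Bisection finds the root because the gap between the two marginal values increases with total consensus spending.

```python
# Sketch, assuming the hypothetical utility u(x, y) = x**alpha + a * y**beta.
# Symmetric first-order condition for total consensus spending C:
#     alpha * ((R - C) / m) ** (alpha - 1) == a * beta * n * C ** (beta - 1)
# (m, n) = (N, 1) multipolar uncoordinated, (1, 1) dictator, (N, N) coordinated.


def consensus_spending(R: float, a: float, alpha: float, beta: float,
                       m: int, n: int, iters: int = 200) -> float:
    """Total consensus spending C solving the first-order condition by bisection."""
    assert 0 < alpha < 1 and 0 < beta <= 1 and a > 0

    def gap(C: float) -> float:
        # Marginal value of idiosyncratic spending minus the (multiplied) marginal
        # value of consensus spending; increasing in C.
        return alpha * ((R - C) / m) ** (alpha - 1) - a * beta * n * C ** (beta - 1)

    lo, hi = R * 1e-12, R * (1 - 1e-12)
    if gap(lo) >= 0:
        return 0.0  # corner solution: nothing goes to consensus goods
    for _ in range(iters):
        mid = (lo + hi) / 2
        if gap(mid) < 0:
            lo = mid  # consensus goods still more valuable at the margin
        else:
            hi = mid
    return (lo + hi) / 2


def three_cases(R: float, N: int, a: float, alpha: float, beta: float):
    """Return (uncoordinated, dictator, coordinated) total consensus spending."""
    uncoordinated = consensus_spending(R, a, alpha, beta, m=N, n=1)
    dictator = consensus_spending(R, a, alpha, beta, m=1, n=1)
    coordinated = consensus_spending(R, a, alpha, beta, m=N, n=N)
    return uncoordinated, dictator, coordinated


c_u, c_d, c_c = three_cases(R=1000.0, N=100, a=0.5, alpha=0.5, beta=0.5)
print(round(c_u, 2), round(c_d, 2), round(c_c, 2))  # → 2.49 200.0 961.54
```

For these parameters the ordering matches the text: uncoordinated spending is tiny, the dictator spends a fifth of resources on consensus goods, and the coordinated group spends most of them. This is one parameterization, not a proof.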

It’s also possible—though less plausible to us—that people might have diminishing returns in consensus goods and linear returns in idiosyncratic goods. In that case, just like the case where both types of preferences are linear.26

So, for these utility functions, the ideal situation is that resources are dispersed among many people and those people are able to coordinate with each other. And if coordination is not possible, public goods are better funded if resources are concentrated.

Does this conclusion hold for all functions where there are diminishing marginal returns on idiosyncratic and/or consensus spending?

There are two effects that we expect hold pretty broadly:

- Coordination lowers the effective price of consensus goods by a factor of . Thus coordination should increase spending on consensus goods (or at least not decrease it—coordination might have zero effect if people place sufficiently low value on consensus goods). So, more funding will go to moral public goods in the multipolar and coordinated case compared to the multipolar and uncoordinated case.

- But if resources are widely distributed, then each person will have a lower level of spending on idiosyncratic goods and face greater marginal returns from increased spending there. So, more funding will go to moral public goods in the dictator case compared to the multipolar and uncoordinated case.

The comparison between the multipolar and coordinated case and the dictator case depends on how these two effects balance out. For the DMR utility functions we’ve looked at so far, the first effect wins out. But this doesn’t necessarily hold in general. For example, consider the utility function:

A dictator will spend 1 unit of resources to max out their utility from idiosyncratic goods and spend the rest of their resources on consensus goods (so ). But if —if people still prefer to spend on idiosyncratic goods even after the multiplier from coordination—then , because everyone will need to satiate on idiosyncratic goods before buying any consensus goods.
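The counterexample can be checked with a short sketch. It assumes, for illustration, the satiating utility \(u(x, y) = \min(x, 1) + a\,y\) with \(0 < a < 1\), so idiosyncratic goods are worth 1 per unit up to one unit and nothing after that. This form and the function names are hypothetical.

```python
# Sketch, assuming the hypothetical satiating utility u(x, y) = min(x, 1) + a * y.


def dictator_spending(R: float, a: float) -> float:
    """Consensus spending by a dictator with resources R."""
    assert 0 < a < 1
    # Idiosyncratic goods are worth 1 per unit until satiated at x = 1, which beats a,
    # so the dictator buys exactly 1 unit of them and puts the rest into consensus goods.
    return max(R - 1.0, 0.0)


def coordinated_spending(R: float, N: int, a: float) -> float:
    """Total consensus spending when N equal people with resources R / N each coordinate."""
    assert 0 < a < 1
    if N * a > 1:
        # The coordination multiplier makes consensus goods beat even unsatiated
        # idiosyncratic goods, so everything goes to consensus goods.
        return R
    # Otherwise (including the indifferent case N * a == 1) each person satiates
    # their own idiosyncratic good first and only then funds consensus goods.
    return N * max(R / N - 1.0, 0.0)


R, N, a = 1000.0, 100, 0.005  # N * a = 0.5 < 1
print(dictator_spending(R, a), coordinated_spending(R, N, a))  # → 999.0 900.0
```

With \(N a < 1\), the coordinated group spends less on consensus goods than the dictator, because each of the \(N\) people first uses up one unit on idiosyncratic goods.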

Appendix B: causal coordination through voluntary contracts

We're skeptical that voluntary contracts can robustly fund moral public goods without incentivizing free-riding.

To illustrate this issue, consider assurance contracts, where people agree to contribute to a public good if and only if enough others do too. Each person will be happy to sign if they think it’s sufficiently likely that the deal won’t go through without their participation.

You could design an assurance contract that funds consensium if and only if everyone who cared about consensium agreed to contribute. But this contract is very brittle. If just one person decides not to pay in—maybe irrationally, or maybe because the mechanism-designer misjudged their preferences—then no money will get spent on consensium.

A less brittle alternative mechanism might pay out if (say) 90% of people pay in. But now you have a different problem: each person only pays in if they think there’s a high enough chance that they’re pivotal—a high enough chance to offset the chance that they’re unnecessarily paying into an already-successful deal. This seems tricky, especially if the population is really large. We model these mechanisms in Appendix C and find that assurance contracts usually won’t go through for realistic population sizes (thousands to tens of millions) unless there are extremely large gains from trade.

We've reviewed other proposals for voluntary mechanisms to fund public goods, but they all have similar issues.

Dominant assurance contracts improve slightly on regular assurance contracts by offering a small payment to signers if the contract fails. This makes signing the assurance contract marginally more appealing. But we don’t think that dominant assurance contracts address the fundamental issues described above:

- If it’s a unanimous assurance contract, then these payments make signing strictly dominant instead of weakly dominant. But it was already in everyone’s interests to sign the contract if they placed any credence on everyone else signing! The issue with the unanimous assurance contract was that it was brittle—if just one player failed to sign the contract, then the contract fell through.

- If only 90% of people need to sign the assurance contract, the payment does shift everyone’s incentives mildly in favor of signing. But the expected payment per person must be much smaller than the amount that each person pays into the contract in expectation (otherwise you would presumably use the funds for the extra payments to pay for the public goods directly), so we expect it won’t change the cost/benefit tradeoff of signing by much.

- Another framing is: the main incentive to free-ride comes from the hope that the public good gets funded even without your contribution. But dominant assurance contracts don’t affect this incentive, as their payments only apply if the public good isn’t funded.

Another mechanism for funding public goods is quadratic funding, which entices donors to join by promising to match their donations. But this relies on an outside source of matching funds, and it’s not clear where this would come from, in the absence of a government funded by coercive taxation.

We’re uncertain about whether a suitable voluntary mechanism exists at all. Saijo and Yamato (2010) prove that you cannot design a voluntary mechanism that guarantees that everyone will contribute to fund a non-excludable public good, although the proof only applies to some classes of one-shot mechanisms.

We’ve spent less time searching for mechanisms that involve multiple rounds, where people can observe who contributed in previous rounds. It’s possible that there’s something here that works.

Still, we think there’s an even more fundamental problem. The reason the unanimous assurance contract was brittle was that people’s decisions are unpredictable. Among agents with legible preferences and perfect rationality, it might work: the mechanism-designer could reliably offer deals that are in their interests, and everyone offered them would reliably accept.

However, if someone anticipates that a mechanism-designer will set up a voluntary mechanism to help people coordinate to fund public goods, then it’s in their interests to self-modify so that they no longer care about the good. If they no longer care, they will predictably refuse any deal where they help pay for the good, and so the mechanism-designer won’t include them in the deal. This allows them to free-ride while others fund the public good (that they originally cared about). But of course everyone who initially cares about the public good faces the same incentive, so they all self-modify and the deal doesn’t happen.

The mechanism-designer could try to discourage strategic self-modification by pre-committing to offer the deal to everyone who ever cared about the public good (assuming they could verify the history of everyone’s values). Once they’ve made that commitment, it’s no longer in anyone’s interest to self-modify to stop caring about the public good: anyone who did so would refuse the deal when it’s offered to them, and then the deal wouldn’t happen, which is bad by their pre-modification lights. But now there’s a commitment race between mechanism-designers and potential funders.

And these problems seem potentially quite deep, such that there may be no solution. For this reason, despite the problems we raised with government funding of moral public goods, we currently think it’s the best approach. This provides one reason to maintain a government (or a relatively small number of governments27) with the power to tax citizens and spend on public goods after the singularity. There are other reasons too—for instance, funding other public goods like mitigation of galaxy-scale existential risks.

Appendix C: modeling assurance contracts

In this section, we model one-time assurance contracts that activate only if a threshold fraction of people sign them, and show that these contracts don’t work unless there are massive gains from trade.

Suppose there are \(N\) people. Each person values both their own idiosyncratic good (“self-sims”) and a shared consensus good (“consensium”). They value these linearly in resources, but care somewhat less about consensium. That is, their utility function is \(u_i = s_i + \delta c\), where \(s_i\) is total spending on person \(i\)'s self-sims, \(c\) is total spending on consensium, and \(0 < \delta < 1\).

Each person has an opportunity to sign an assurance contract with the following terms:

- If at least \(k\) people sign, each signatory pays $1, so a total of \(k\) gets spent on consensium. (For simplicity, we assume that contributions beyond the threshold are essentially "burned": spent in a way that's useless to the contributors.)

- If fewer than \(k\) people sign, no one pays anything.

Everyone simultaneously decides whether or not to sign, without knowing other people’s decisions.

If the contract goes through, the net benefit of signing is \(\delta k - 1\) and the benefit of not signing is \(\delta k\).

There’s no Nash equilibrium where all \(N\) people sign (for \(k < N\)), because any one person could choose not to sign and benefit by free-riding on the others. There’s a weak Nash equilibrium where no one signs. If \(\delta k > 1\), there is a symmetric mixed-strategy equilibrium where everyone signs with some probability \(p\).

For each person to be willing to randomize, it must be the case that the expected value of signing is the same as the expected value of not signing. That is:

$$P(\text{threshold met} \mid \text{sign}) \cdot (\delta k - 1) = P(\text{threshold met} \mid \text{don't sign}) \cdot \delta k$$

Whether the threshold is met in each case depends on the number of other signatories. Let’s call the number of other signatories \(S\), where \(S \sim \text{Binomial}(N-1, p)\). Then:

$$(\delta k - 1)\,P(S \ge k-1) = \delta k\,P(S \ge k)$$

Or, equivalently:

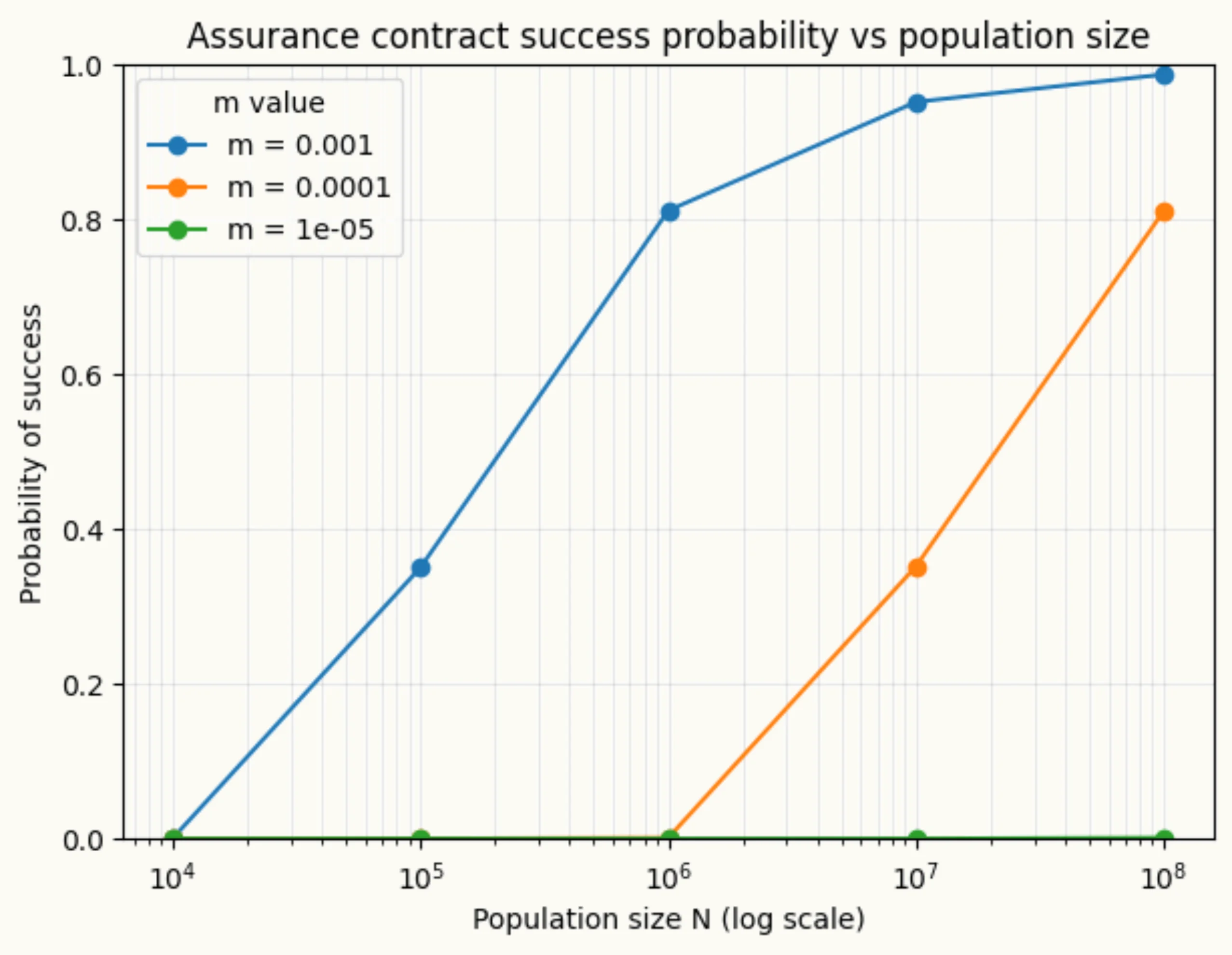

There’s no general closed form for the signing probability \(p\), so we used numerical methods to find \(p\) and the probability that the threshold number of signatories is reached, for different values of \(N\) and \(\delta\).

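For example, consider a toy case with 1,000 people who each value consensium 1% as much as self-sims (\(\delta = 0.01\)). Each has the option to sign an assurance contract to spend $1 on consensium, which will be enacted if at least 90% of them (\(k = 900\)) choose to sign. Then we want to find \(p\) such that, with \(S \sim \text{Binomial}(999, p)\):

$$(0.01 \cdot 900 - 1)\,P(S \ge 899) = 0.01 \cdot 900 \cdot P(S \ge 900)$$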

Solving numerically, this gives us and so the probability that the contract is enacted is .
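A minimal sketch of this numerical solve in plain Python, with no external libraries. For \(N\) people, threshold \(k\), relative value \(\delta\) of consensium, and \(S \sim \text{Binomial}(N-1, p)\) other signatories, it bisects on \(p\) using a rearranged indifference condition, \(\delta k\,P(S = k-1) = P(S \ge k-1)\) (substitute \(P(S \ge k) = P(S \ge k-1) - P(S = k-1)\) into the indifference equation). It works in log space so that binomial probabilities don't underflow. The function names are hypothetical, and this is not necessarily the method used to produce the figure.

```python
import math


def log_binom_pmf(j: int, n: int, p: float) -> float:
    """log P(X = j) for X ~ Binomial(n, p), computed in log space."""
    return (math.lgamma(n + 1) - math.lgamma(j + 1) - math.lgamma(n - j + 1)
            + j * math.log(p) + (n - j) * math.log1p(-p))


def log_tail(j0: int, n: int, p: float) -> float:
    """log P(X >= j0) for X ~ Binomial(n, p), via a stable log-sum-exp."""
    terms = [log_binom_pmf(j, n, p) for j in range(j0, n + 1)]
    top = max(terms)
    return top + math.log(sum(math.exp(t - top) for t in terms))


def indifference_gap(p: float, N: int, k: int, delta: float) -> float:
    # log(delta * k * P(S = k-1)) - log P(S >= k-1), with S ~ Binomial(N-1, p).
    # Zero exactly when signing and not signing have equal expected value.
    return math.log(delta * k) + log_binom_pmf(k - 1, N - 1, p) - log_tail(k - 1, N - 1, p)


def solve_signing_probability(N: int, k: int, delta: float, iters: int = 100) -> float:
    """Mixed-strategy signing probability p, found by bisection."""
    assert delta * k > 1 and 1 <= k < N
    lo, hi = 1e-9, 1 - 1e-9  # gap is positive near p = 0 and negative near p = 1
    for _ in range(iters):
        mid = (lo + hi) / 2
        if indifference_gap(mid, N, k, delta) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def enactment_probability(N: int, k: int, p: float) -> float:
    """P(at least k of N people sign) when each signs independently with probability p."""
    return math.exp(log_tail(k, N, p))


N, delta = 1000, 0.01
k = 9 * N // 10  # 90% threshold: 900 signatories
p = solve_signing_probability(N, k, delta)
print(p, enactment_probability(N, k, p))
```

Direct summation like this is fine for populations in the thousands; for populations in the millions, a normal approximation or a library routine for binomial tails would be more practical.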

In the graph below, we plot the probability that the contract is enacted for many combinations of \(N\) and \(\delta\).


Probability that at least \(k\) people will sign an assurance contract, given different values of \(N\) (population size) and \(\delta\) (discount on consensium relative to self-sims). We set \(k = 0.9N\), so 90% of the population has to agree to the deal. For a fixed \(\delta\), deals become more likely as population size increases because the gains from trade grow with \(N\).

So, in principle, these types of assurance contracts are feasible for some values of \(N\) and \(\delta\). But unfortunately, it seems like the gains from trade have to be quite high for deals to be very likely to go through. For example, for a population of 100 million, if everyone values spending on consensium at 0.1% of the value of spending the same amount on self-sims, then a deal will go through with 99% probability. But if everyone values consensium only 0.001% as much as self-sims, then a deal will go through with just 0.001% probability.

It’s important to note that even in the second case, gains from trade are huge! If the contract is enacted, each person gains the equivalent of $900 of self-sims, while the cost to each signatory is just $1. It’s very strongly in everyone’s interest for the contract to be carried out, but because of the free-riding incentive, it almost never gets enough signatories.
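The $900 figure is each person's gain from enactment, \(\delta k\), measured in self-sim dollars. Here is a quick exact-arithmetic check of both cases from the 100-million-person example, assuming the 90% threshold used in the figure (\(k = 0.9N\)). The variable names are illustrative.

```python
from fractions import Fraction

N = 100_000_000          # population size
k = 9 * N // 10          # 90% threshold: 90,000,000 signatories
COST_PER_SIGNATORY = 1   # each signatory pays $1

# delta: value of $1 of consensium relative to $1 of self-sims
# (exact fractions avoid floating-point rounding)
cases = {"0.1%": Fraction(1, 1_000), "0.001%": Fraction(1, 100_000)}
for label, delta in cases.items():
    benefit = delta * k  # each person's gain from enactment, in self-sim dollars
    print(label, benefit, "vs cost", COST_PER_SIGNATORY)
# prints:
# 0.1% 90000 vs cost 1
# 0.001% 900 vs cost 1
```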